From managing finances to communicating with customers and employees, the importance of IT in running your company is fairly self-evident – as you wouldn’t be able to perform your core business operations without it.

But with technology advancing at such a rapid pace, it can be difficult to keep up with the latest trends and updates.

In this blog post, we will discuss the importance of staying up to date with technology and the benefits it can bring to your company.

The Importance of IT in Modern Businesses

The importance of technology in business is more apparent than ever in today’s day and age – it offers faster and much more efficient ways to solve your business problems while reducing operational costs in the long run.

But keeping up with the ever-evolving landscape of technology requires a high level of expertise that you may not have access to internally. Not to mention that managing your own technology can be time-consuming and may take time away from other important business tasks.

This is why managed IT services have become a popular option in recent years: they offer a way to stay up to date with technology and to access more advanced resources at an affordable cost.

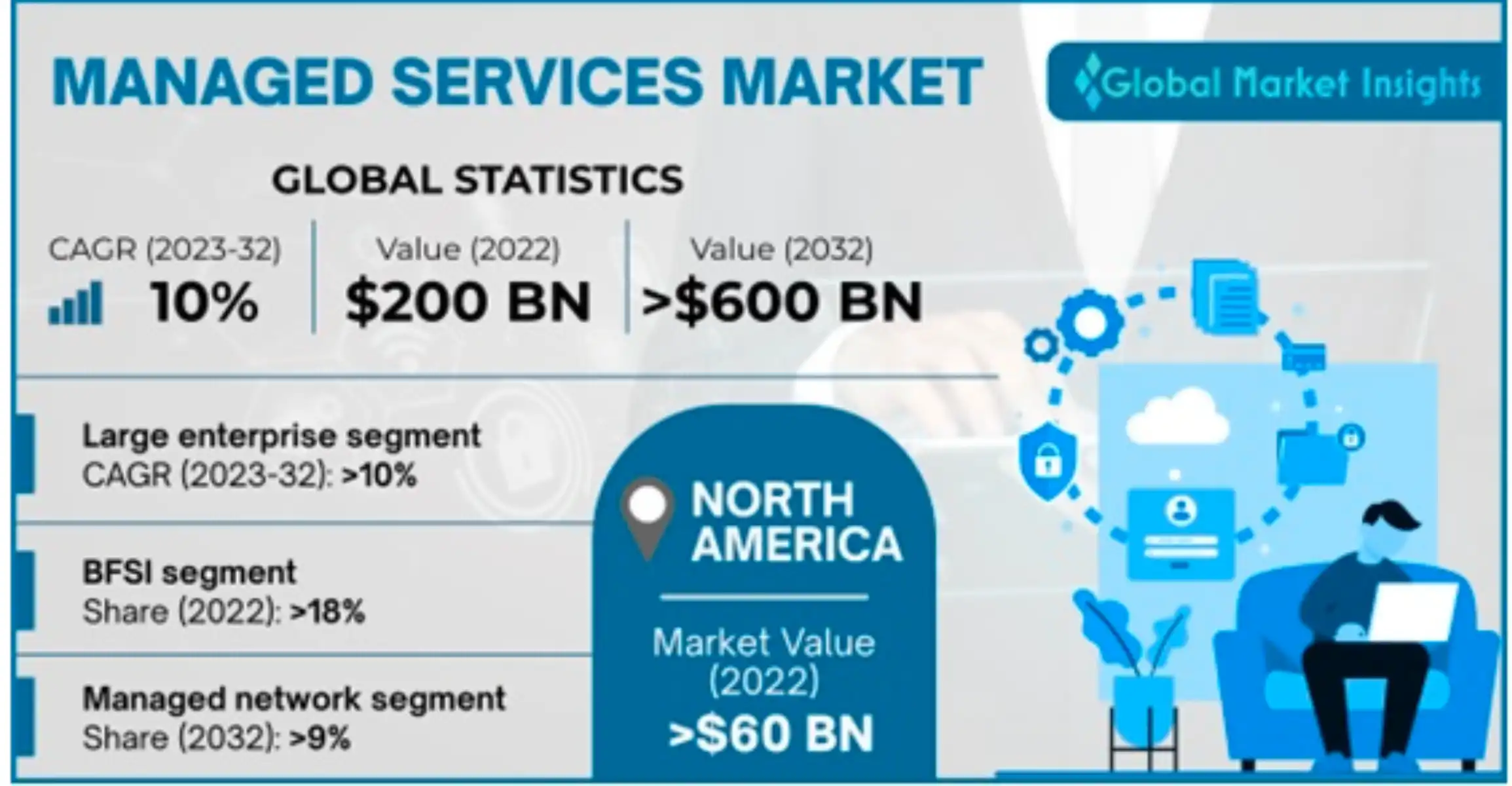

Managed service providers (MSPs) have become so popular in recent years that the industry is projected to expand at a compound annual growth rate (CAGR) of around 10% from 2023 to 2032.

Image Credit: Global Market Insights

Let’s take a look at the importance of IT upgrades in propelling your business forward and how a managed tech company can help!

10 Key Reasons Why Staying Up-to-Date with Technology is Imperative for Success

1. Enhanced Security

With the rise of cybercrime, hackers are constantly finding new ways to exploit vulnerabilities in outdated systems. SMBs are the most vulnerable because of their limited resources, with 43% of all cyber attacks targeting organizations with fewer than 1,000 employees.

By keeping your software and hardware up to date, you are significantly reducing these risks.

Updates often include security patches and bug fixes that address newly discovered vulnerabilities. By failing to update, you are leaving your business open to potential threats, which can have serious consequences for your reputation and bottom line.

2. Enhanced Efficiency

Another reason to stay up to date with technology is to ensure optimal performance. As technology advances, new and improved features become available, which can enhance productivity, streamline processes, and ultimately save you time and money.

Updates can also improve the speed and functionality of your systems, making them more reliable and efficient.

If your business relies on software or hardware that is outdated, you may experience slow performance, crashes, and other issues that can impact your ability to operate effectively.

3. Improved Scalability

New technology solutions offer more flexibility in terms of how and where work can be done.

By keeping your technology up to date, you can streamline processes, reduce costs, and easily scale your resources up or down as needed. This can help you handle an increase in demand as your business grows, without needing to hire additional staff or invest in additional resources.

4. Competitive Advantage

By keeping your technology up to date and embracing new technology trends, you can give your business a significant competitive advantage.

This is because technology can provide your business with real-time data and insights, helping you make more informed decisions about your operations and growth strategies.

5. Improved Customer Experience

Technology can help businesses provide a better customer experience, through personalized interactions, faster response times, and more efficient processes. This can in turn increase customer loyalty and satisfaction, giving you a leg up in the marketplace.

6. Cost Savings

While it may seem counterintuitive, maintaining up-to-date technology can actually save you money in the long term.

How exactly?

- By ensuring optimal performance, you can avoid costly downtime and repairs

- By taking advantage of new features and applications, you’re able to streamline processes and reduce overhead costs

- By staying ahead of potential security threats, you can avoid the costly fallout from a data breach or cyber-attack

7. Increased Collaboration

Staying up to date with technology can play a crucial role in increasing team member collaboration in your business.

Collaboration software can allow team members to work together on the same project, even if they are in different locations. The latest technology tools can also automate repetitive tasks, allowing team members to focus on more strategic work, thus increasing their overall productivity.

8. Improved Analytics

Staying up to date with emerging technology can have a significant impact on analytics, improving the accuracy, speed, and depth of data analysis.

With features like real-time data insights and visualization, these tools can analyze vast amounts of data quickly and efficiently, providing you with a deeper understanding of customer behavior, market trends, and business operations.

9. Attracting Talent

Having up-to-date technology can make a business more attractive to job seekers, especially younger generations who are highly tech-savvy and accustomed to using the latest tools and software.

These candidates look for employers who offer modern technology to support their work. Businesses that invest in it demonstrate that they are committed to growth, innovation, and keeping up with the latest trends.

10. Future Proofing

Over 92% of G2000 companies outsource their IT management as a way to stay on top of tech trends and streamline productivity.

By leveraging the latest technology, your company can position itself for long-term success and stay ahead of the competition in a rapidly changing business environment.

How Do I Stay Up to Date with Technology?

So, how can you ensure that you stay up to date with technology and offer your business the best opportunity for growth and efficiency?

It’s easy with these simple steps!

- Invest in technology procurement services

- Complete infrastructure assessments

- Schedule regular updates

- Strategize accordingly

- Stay up to date with tech news and trends on social media

Ensure You Have the Most Up-to-Date Technology with Protek Support as Your MSP

As a member of the local tech community for over 11 years, Protek offers proactive IT asset management to help you stay up to date with the latest developments in technology and streamline your core business processes.

Track your vital IT assets and identify areas for improvement with our support. Learn more about how we can assist you in enhancing your tech environment!